TMS Cloud Migration Stabilization: The 60-Day Post-Go-Live Protocol That Prevents 80% of Operational Failures

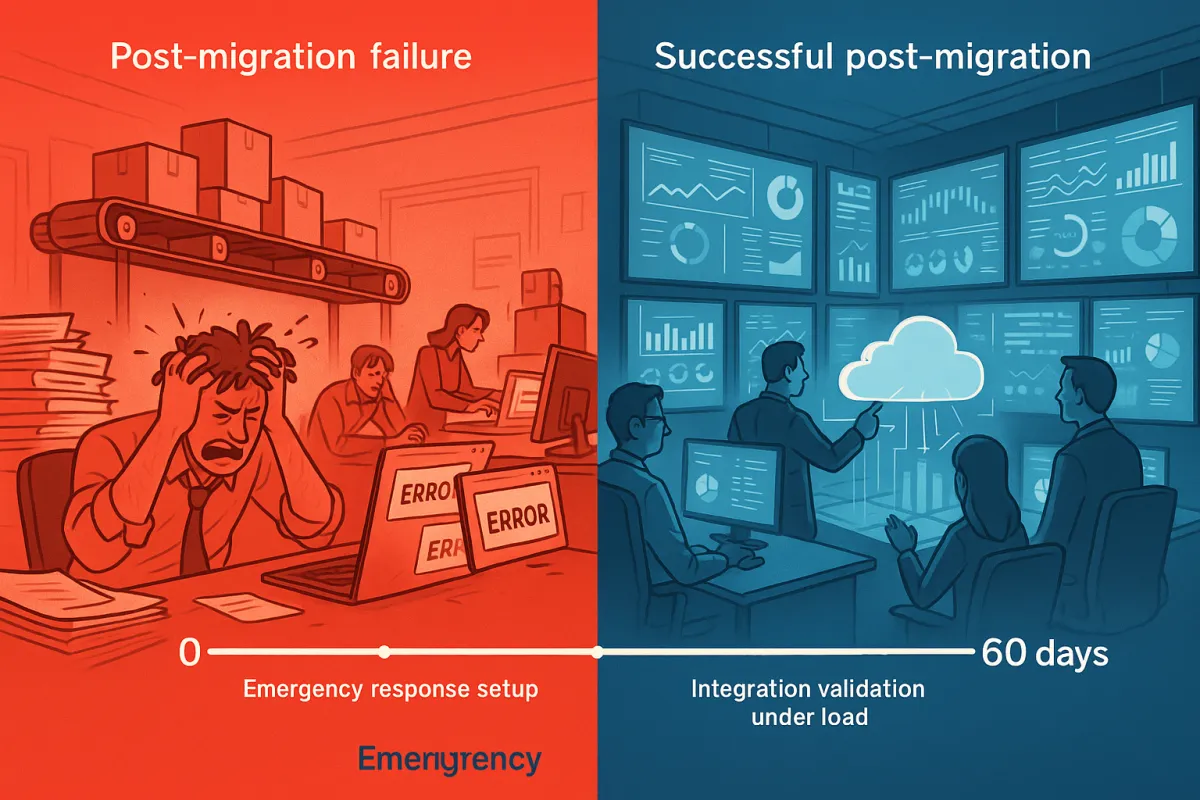

Too many companies botch a migration because they rush it, and the damage shows up after go-live. Without effective change management, businesses see employee resistance, operational disruptions, and lost productivity. Your TMS cloud migration went live three weeks ago. Users are logged in, carriers are connected, and shipments are flowing. You're tempted to declare victory and move on to the next project.

Don't.

A TMS project does not end with the go-live date. It is an ongoing process of continuous improvement that requires regular maintenance, monitoring, and optimization. The next 60 days determine whether your migration becomes a success story or joins the 70% that face operational setbacks. Here's the protocol that prevents most post-migration failures.

Why Cloud TMS Migrations Stumble After Go-Live

Post-migration testing confirms that everything still works together. A retail business, for example, might discover after migrating that its inventory system no longer communicates correctly with its customer database because of unforeseen compatibility issues. The technical implementation might be flawless, but that's only half the battle.

Testing and training issues can result in poor performance, user dissatisfaction, and operational disruptions. I've seen perfectly configured TMS platforms fail because operations teams skipped the stabilization protocol. Users revert to spreadsheets. Carrier integrations start timing out. Exception handling breaks down under real-world load.

The pattern is predictable. The cloud environment's performance can differ from the on-premises setup, so identifying bottlenecks, latency issues, and other performance problems during testing is crucial. But your testing scenarios covered happy paths. Real operations throw everything else at your system.

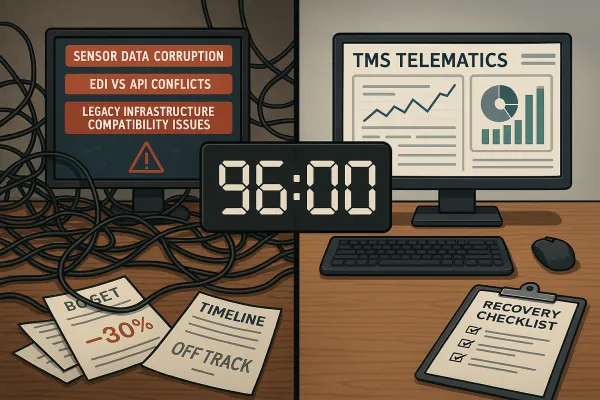

Here's what catches most teams off guard:

- Integration drift: APIs that worked in testing start failing under production volume

- User behavior gaps: Training scenarios don't match actual workflows

- Performance degradation: Response times slow down with concurrent users

- Exception overload: Edge cases that rarely appeared in testing become daily problems

The 60-Day Critical Window: Your Stability Baseline

The first 60 days establish your operational baseline. Once everything is confirmed to be working correctly, monitor the systems for post-migration issues and make the necessary adjustments. A successful go-live might look like an e-commerce business that starts to see faster page loading times and improved customer experiences. But improvements only stick if you actively manage the transition.

During this window, you're collecting three types of data:

Performance metrics: Response times, throughput, error rates, system availability

User behavior patterns: Login frequency, feature adoption, support ticket categories

Business impact indicators: Shipping accuracy, carrier performance, cost per shipment

Track these metrics daily for the first two weeks, then weekly through day 60. You're looking for trends, not perfect numbers. A 10% increase in average response time might be acceptable if throughput improves by 30%.
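The trade-off above can be made concrete with a small calculation. This is a minimal sketch, assuming you record one reading per day and have a pre-migration baseline for each metric; the function names and sample numbers are hypothetical.

```python
from statistics import mean

def percent_change(baseline: float, current: float) -> float:
    """Return the change from baseline to current as a percentage."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline * 100.0

def weekly_trend(daily_values: list[float], baseline: float) -> float:
    """Compare the mean of a week's daily readings against the baseline."""
    return percent_change(baseline, mean(daily_values))

# Hypothetical readings: response time in ms, throughput in shipments per hour.
response_ms = [440, 450, 430, 445, 435]
throughput = [1300, 1290, 1310, 1305, 1295]

response_delta = weekly_trend(response_ms, baseline=400)
throughput_delta = weekly_trend(throughput, baseline=1000)
print(f"response time: {response_delta:+.1f}%, throughput: {throughput_delta:+.1f}%")
```

Comparing weekly means rather than single readings keeps one bad afternoon from looking like a trend.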

Week 1-2: Emergency Response Setup

Your first priority is hypercare support. It's critical to appoint a project lead who understands the magnitude of this transition and is committed to undertaking the rollout with precision. But that project lead needs backup.

Set up these support channels immediately:

Dedicated hotline: Separate from regular IT support. Staff it with people who know the migration details.

Escalation matrix: Define what gets escalated to vendor support versus handled internally. Document response times for each category.

Daily standup meetings: 15-minute check-ins with operations supervisors. What broke? What's working? What needs attention?
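An escalation matrix is easier to follow when it lives in one lookup table instead of in people's heads. The sketch below is one possible shape; every category name, owner, and response target is a made-up placeholder to be replaced with values your team and vendor actually agree on.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class EscalationRule:
    owner: str             # who handles the issue first
    response_minutes: int  # target time to first response

# Hypothetical categories and targets; replace with your own agreed values.
ESCALATION_MATRIX = {
    "label_generation_down": EscalationRule("vendor_support", 15),
    "carrier_api_errors": EscalationRule("internal_integration_team", 30),
    "user_access_issue": EscalationRule("internal_hotline", 60),
}

# Anything uncategorized goes to the hotline with a looser target.
DEFAULT_RULE = EscalationRule("internal_hotline", 120)

def route(category: str) -> EscalationRule:
    """Look up who owns an issue category and how fast they should respond."""
    return ESCALATION_MATRIX.get(category, DEFAULT_RULE)

rule = route("carrier_api_errors")
print(f"{rule.owner} responds within {rule.response_minutes} minutes")
```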

Quick-win configurations make a difference too. Enable detailed logging for the first month. Set up monitoring alerts for key processes like label generation, carrier API calls, and user authentication. Configure automated backups with shorter intervals than normal.

I've seen teams using platforms like Blue Yonder, Cargoson, or nShift succeed faster when they establish these protocols on day one. The vendors with better support infrastructure help, but your internal response capability matters more.

Week 3-4: Integration Validation Under Load

Data and integration are another critical part of a TMS project. That means designing and testing the connections between your TMS and your other applications, such as your ERP, carrier APIs, third-party services, and reporting tools.

Now you validate integrations under real-world conditions. Run these health checks weekly:

API response monitoring: Track response times for carrier APIs, ERP connections, and third-party services. Set alerts at 150% of baseline response time.

Data flow validation: Verify order imports, shipment updates, and tracking data are flowing correctly. Check for dropped records or delayed processing.

Error handling verification: Intentionally trigger error conditions. Does your system handle carrier API timeouts gracefully? What happens when ERP sends malformed data?
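The alerting rule from the health checks above can be sketched in a few lines. This assumes you already collect response times per integration; the integration names and numbers are hypothetical, and using the median as the baseline is a design choice of this sketch, not a requirement.

```python
from statistics import median

ALERT_FACTOR = 1.5  # alert when latency reaches 150% of baseline

def baseline_ms(samples: list[float]) -> float:
    """Use the median so a few slow outliers do not inflate the baseline."""
    return median(samples)

def breaches(baselines: dict[str, float], latest: dict[str, float],
             factor: float = ALERT_FACTOR) -> list[str]:
    """Return the integrations whose latest latency is at or above the threshold."""
    return sorted(
        name for name, ms in latest.items()
        if name in baselines and ms >= baselines[name] * factor
    )

# Hypothetical integrations with response times in milliseconds.
baselines = {"carrier_a": baseline_ms([180, 200, 220]), "erp": baseline_ms([110, 120, 130])}
latest = {"carrier_a": 310, "erp": 150}
print(breaches(baselines, latest))
```

Here only `carrier_a` is flagged, because 310 ms exceeds its 300 ms threshold while the ERP reading stays under 180 ms.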

Document everything that breaks. The pattern of failures tells you which integrations need strengthening. Common issues include webhook timeouts, certificate renewal problems, and rate limiting on carrier APIs.
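One way to trigger an error condition on purpose is a test double that times out on demand. The sketch below is illustrative only: `FlakyCarrier` and `call_with_retry` are hypothetical names, not part of any TMS or carrier SDK, and a real check would wrap your actual integration client.

```python
import time
from typing import Callable, Optional

def call_with_retry(fn: Callable[[], dict], attempts: int = 3,
                    backoff_seconds: float = 0.0) -> Optional[dict]:
    """Call fn, retrying on TimeoutError; return None if every attempt times out."""
    for attempt in range(1, attempts + 1):
        try:
            return fn()
        except TimeoutError:
            if attempt < attempts:
                # Linear backoff between attempts; zero by default for tests.
                time.sleep(backoff_seconds * attempt)
    return None

class FlakyCarrier:
    """Test double that times out a fixed number of times before succeeding."""

    def __init__(self, failures: int):
        self.failures = failures
        self.calls = 0

    def get_rate(self) -> dict:
        self.calls += 1
        if self.calls <= self.failures:
            raise TimeoutError("simulated carrier timeout")
        return {"carrier": "demo", "rate": 42.5}

recovering = FlakyCarrier(failures=2)
print(call_with_retry(recovering.get_rate))  # succeeds on the third attempt

down = FlakyCarrier(failures=10)
print(call_with_retry(down.get_rate))  # gives up and returns None
```

Returning `None` instead of raising lets the caller fall back, for example to a cached rate or a manual queue, which is the graceful behavior you are trying to verify.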

Week 5-8: User Adoption Optimization

Users can only get full value from your TMS if they understand it. Establish training programs that take people beyond the basics, so the new cloud-based functions and features actually deliver a higher ROI.

By week 5, usage patterns emerge. Some users embrace the new system. Others find workarounds that bypass it entirely. Both behaviors provide valuable data.

Analyze user activity logs to identify:

- Features that get ignored (training gap or poor UX?)

- Processes that take longer than expected (workflow optimization needed?)

- Functions where users frequently ask for help (documentation or training issue?)
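The first and third questions above can be answered from a simple event export. This is a rough sketch that assumes each log entry carries a `feature` and an `action` field; real TMS activity logs will have their own schema, and the feature names here are invented.

```python
from collections import Counter

def unused_features(events: list[dict], all_features: list[str],
                    min_uses: int = 1) -> list[str]:
    """Return features that appear in fewer than min_uses log events."""
    counts = Counter(e["feature"] for e in events)
    return sorted(f for f in all_features if counts[f] < min_uses)

def help_hotspots(events: list[dict], top_n: int = 3) -> list[tuple[str, int]]:
    """Return the features where users most often opened help."""
    counts = Counter(e["feature"] for e in events if e.get("action") == "help_opened")
    return counts.most_common(top_n)

# Hypothetical activity log export.
events = [
    {"user": "ana", "feature": "rate_shopping", "action": "used"},
    {"user": "ana", "feature": "rate_shopping", "action": "help_opened"},
    {"user": "ben", "feature": "label_print", "action": "used"},
    {"user": "ben", "feature": "rate_shopping", "action": "help_opened"},
]
features = ["rate_shopping", "label_print", "auto_routing"]

print(unused_features(events, features))
print(help_hotspots(events))
```

In this sample, `auto_routing` never appears in the log and `rate_shopping` is the only help hotspot, which points to where a focused session would pay off.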

Run focused training sessions based on actual usage gaps. If users struggle with rate shopping, don't retrain the entire system. Focus on carrier selection workflows. If exception handling trips people up, create job aids for common scenarios.

Manhattan Active, Cargoson, and Oracle TM all have different user experience philosophies. Tailor your training to match how your chosen platform actually works, not how you think it should work.

Days 30-60: Advanced Stabilization

Advanced stabilization means keeping your system up to date with the latest features, patches, and upgrades; reviewing system performance, usage, and feedback; identifying and resolving issues or gaps; and exploring new opportunities to improve functionality, efficiency, and value.

After 30 days, shift focus from incident response to optimization. You have enough baseline data to make informed improvements.

Advanced reporting setup: Configure dashboards that track business metrics, not just system metrics. Cost per shipment. On-time delivery rates. Carrier performance scores. These drive behavior better than system uptime percentages.

Automation configuration: Enable workflow automation features you couldn't risk during the first month. Auto-routing rules. Exception handling triggers. Notification templates.

Cost optimization: Costs are hard to manage during and after a migration because of unexpected migration expenses, changes in operational costs, and the cost of training staff on new cloud-based tools. Review your cloud resource usage. Are you over-provisioned? Under-utilized?

This phase requires coordination between operations, IT, and finance teams. The goal isn't perfection. It's sustainable improvement.

Common Post-Migration Failures & 15-Minute Fixes

Some problems appear repeatedly across different TMS platforms. Here are the quick fixes that solve 80% of common issues:

Credential rotation problems: Set up automated certificate renewal 30 days before expiration. Test the renewal process quarterly. Document the manual override procedure.
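The 30-day rule can be checked with plain date arithmetic. This is a minimal sketch that assumes you already know each certificate's expiry timestamp; how you obtain it depends on your platform, and the function name is hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

RENEWAL_WINDOW = timedelta(days=30)

def needs_renewal(not_after: datetime, now: Optional[datetime] = None,
                  window: timedelta = RENEWAL_WINDOW) -> bool:
    """Return True when the certificate expires within the renewal window."""
    now = now or datetime.now(timezone.utc)
    return not_after - now <= window

# Hypothetical expiry dates, checked against a fixed "today" for repeatability.
today = datetime(2025, 3, 1, tzinfo=timezone.utc)
expiring_soon = datetime(2025, 3, 20, tzinfo=timezone.utc)
expiring_later = datetime(2025, 6, 1, tzinfo=timezone.utc)

print(needs_renewal(expiring_soon, now=today))
print(needs_renewal(expiring_later, now=today))
```

An already expired certificate also returns `True`, since the remaining time is negative.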

Webhook backlogs: Configure dead letter queues for failed webhook deliveries. Set up monitoring alerts when queue depth exceeds normal levels. Create a manual replay process for critical events.
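To illustrate the idea, here is an in-memory sketch of a dead letter queue with a depth alert and manual replay. It is deliberately simplified: a production setup would normally use the queueing service your platform provides, and `WebhookDispatcher` and its parameters are hypothetical names.

```python
from collections import deque
from typing import Callable

class WebhookDispatcher:
    """Deliver events, parking the ones that keep failing in a dead letter queue."""

    def __init__(self, deliver: Callable[[dict], None],
                 max_attempts: int = 3, alert_depth: int = 100):
        self.deliver = deliver
        self.max_attempts = max_attempts
        self.alert_depth = alert_depth
        self.dead_letters: deque = deque()

    def send(self, event: dict) -> bool:
        """Try delivery up to max_attempts times; park the event on failure."""
        for _ in range(self.max_attempts):
            try:
                self.deliver(event)
                return True
            except Exception:
                continue
        self.dead_letters.append(event)
        return False

    def queue_alert(self) -> bool:
        """Return True when queue depth exceeds the configured normal level."""
        return len(self.dead_letters) > self.alert_depth

    def replay(self) -> int:
        """Retry every parked event once; return how many were delivered."""
        delivered = 0
        for _ in range(len(self.dead_letters)):
            event = self.dead_letters.popleft()
            try:
                self.deliver(event)
                delivered += 1
            except Exception:
                self.dead_letters.append(event)  # still failing, keep it parked
        return delivered
```

A typical use is to call `send` for each outgoing event, poll `queue_alert` from your monitoring job, and run `replay` by hand once the receiving endpoint is healthy again.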

Label generation failures: Enable printer failover for critical workstations. Cache label templates locally. Set up backup label generation through carrier websites when APIs fail.

Performance degradation: Monitor database query performance. Enable connection pooling if not already configured. Set up automated cleanup for old transaction logs.

User authentication issues: Configure session timeout warnings. Enable password reset self-service. Set up backup authentication methods for critical users.

These fixes work across Transporeon, Alpega, Cargoson, and other cloud TMS platforms. The implementation details vary, but the concepts remain consistent.

Your 60-Day Stabilization Checklist

Here's your daily and weekly action plan:

Daily (Days 1-14):

- Check system performance dashboard

- Review overnight processing logs

- Monitor support ticket queue

- Verify critical integrations are responding

- Hold 15-minute operations standup

Weekly (Days 15-60):

- Analyze user activity reports

- Review exception handling effectiveness

- Test backup procedures

- Update documentation based on discovered issues

- Conduct training sessions for identified gaps

End of week checkpoints:

- Week 2: Hypercare effectiveness assessment

- Week 4: Integration stability review

- Week 6: User adoption analysis

- Week 8: Performance baseline establishment

Key metrics to track throughout:

- System uptime percentage

- Average response time for key functions

- User login frequency and session duration

- Support ticket volume by category

- Integration error rates

- Business process completion rates
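Two of these metrics reduce to simple ratios, shown here so everyone computes them the same way. The formulas are standard; the sample figures are hypothetical.

```python
def uptime_percent(total_minutes: float, downtime_minutes: float) -> float:
    """Share of the period during which the system was available."""
    if total_minutes <= 0:
        raise ValueError("total_minutes must be positive")
    return (total_minutes - downtime_minutes) / total_minutes * 100.0

def error_rate_percent(failed_calls: int, total_calls: int) -> float:
    """Share of integration calls that failed; 0.0 when there was no traffic."""
    if total_calls == 0:
        return 0.0
    return failed_calls / total_calls * 100.0

# Hypothetical 60-day window: 86,400 minutes with 43.2 minutes of downtime.
print(f"uptime: {uptime_percent(60 * 24 * 60, 43.2):.2f}%")
print(f"integration errors: {error_rate_percent(25, 10_000):.2f}%")
```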

You've taken the time to create a migration plan; now follow it! While flexibility is necessary for solving problems and overcoming obstacles in real-time, stick to a well-defined process for integrating your new TMS into operations. But remember that stabilization isn't just about following the plan. It's about adapting based on what you learn.

Your 60-day stabilization protocol isn't a one-time effort. Use it as the template for future system changes, major updates, or additional module rollouts. The discipline you build during these first 60 days becomes the foundation for long-term operational excellence.

Most TMS cloud migrations that stumble do so because teams treat go-live as the finish line. The teams that succeed treat it as mile marker one in a longer journey toward operational maturity.