Carrier API Integration Meltdowns: The 2-Hour Emergency Protocol That Prevents 90% of TMS Connectivity Disasters

Picture Tuesday morning at 8:47 AM. Your TMS dashboard shows green status across all carriers, but somehow UPS shipments aren't moving. Unannounced carrier API version updates cause costly operational disruption, hour after hour, when no abstraction layer exists. Carriers, including UPS, FedEx, and USPS, have accelerated their API release cycles heading into 2025 and 2026, with migration windows shrinking as the number of affected integrations per enterprise grows.

You know this scenario because you've lived it. A degraded carrier API during peak season can cost over $100,000 per hour in fulfillment disruption. Your operations team scrambles between the TMS interface, carrier websites, and emergency phone calls while customer shipments pile up in digital limbo.

The 2026 reality is different from previous years. When planning and execution systems connect only through batch synchronization or loosely coupled APIs, execution constraints don't reach planning in time to influence decisions. Your morning optimization runs completed hours ago, but the carrier integration that just failed is holding 200 shipments hostage.

The Hidden Cost of API Integration Failures in Modern TMS Operations

For enterprise teams managing thousands of shipments daily, these compressed release cycles create a perfect storm: forced migrations under hard deadlines while dealing with the new reality of aggressive rate limiting across all major carriers. The operational disruption goes beyond simple downtime. When your primary carrier API fails during peak hours, the cascading effects touch every part of your logistics operation.

Your planning systems allocated shipments based on rate and service commitments that no longer hold. Customer promises made yesterday become impossible to keep today. The resulting failure pattern shows up as planning-execution mismatches, drivers sent to capacity-constrained depots, reactive carrier management triggered hours before cutoff, and cost leakage accumulating across point-to-point connections no single team owns.

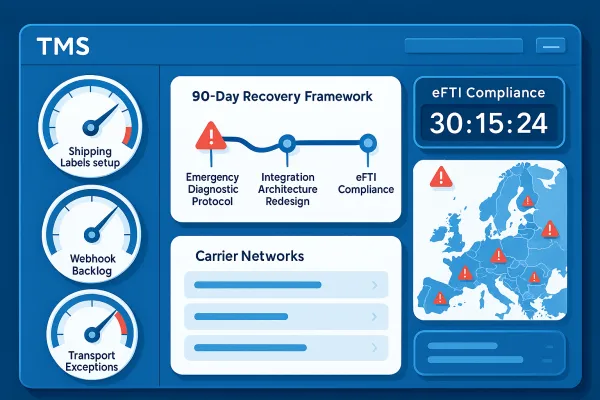

Modern carrier API management operates under compressed windows that leave zero margin for error. USPS Web Tools shut down on January 25, 2026, and FedEx SOAP endpoints retire on June 1, 2026. UPS has already moved its APIs to OAuth 2.0. These weren't gradual transitions with lengthy migration periods: by February 3rd, 73% of integration teams reported production authentication failures.

The Anatomy of API Integration Cascade Failures

Integration strategies that work perfectly in testing environments collapse under production load in predictable ways. An enterprise operating across ten carriers, two warehouse management systems, and three OMS instances can accumulate 25 or more point-to-point integrations, each with its own authentication logic, error handling, schema mapping, and maintenance dependency.

The maintenance overhead compounds faster than most teams anticipate. When a new carrier is added, a new integration is built. When a carrier updates its schema, that specific integration is patched. No single team owns allocation logic across the network, so the coordination burden grows with every addition.

Here's the operational reality: A dispatcher discovers at 7 AM that a carrier has suspended service to a zone after manifests have already been generated. A warehouse flags a capacity constraint after orders have been committed to a specific vehicle load. Your TMS built for optimization becomes a liability when real-time constraints can't reach the planning layer fast enough to matter.

The 2-Hour Emergency Response Framework

When carrier API integration failures hit, you need a systematic response that moves from containment to diagnosis to recovery. The teams that recover fastest follow a structured protocol that treats failure as a question of when, not if.

Phase 1 (0-15 minutes): Immediate Triage and Containment

Start with external validation before diving into your own systems. Check the carrier's API status page immediately. UPS provides real-time API health tracking that covers network or system outages, scheduled maintenance, and resolved issues. FedEx and DHL have similar dashboards. If their status shows operational, the problem lies within your integration stack.
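As a rough sketch, that first decision can be written down as a two-input check. The `Fault` enum and `triage` function are hypothetical names for illustration, and the two booleans stand in for whatever your status-page check and your own probe call actually return.

```python
from enum import Enum


class Fault(Enum):
    CARRIER_SIDE = "carrier_side"
    INTEGRATION_SIDE = "integration_side"
    NO_FAULT_FOUND = "no_fault_found"


def triage(carrier_status_operational: bool, own_calls_succeeding: bool) -> Fault:
    """Combine the carrier's published status with a probe from your own stack."""
    if not carrier_status_operational:
        # The carrier reports an outage: contain first, don't debug your own code yet.
        return Fault.CARRIER_SIDE
    if not own_calls_succeeding:
        # The carrier looks healthy but your calls fail: look inward.
        return Fault.INTEGRATION_SIDE
    # Both look fine: the problem may sit further downstream.
    return Fault.NO_FAULT_FOUND
```

A `NO_FAULT_FOUND` result matches the opening scenario, where dashboards are green but shipments still aren't moving, and points the team toward downstream checks.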

Activate backup systems immediately. Your TMS should support multiple carrier integrations for exactly this scenario. Switch to secondary carriers for new shipments. If UPS is down, route urgent shipments through FedEx or regional LTL carriers. Most TMS platforms like Cargoson, MercuryGate, or Descartes support this failover automatically.
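If your platform does not handle failover for you, the core rule is small enough to sketch. The function name, the carrier labels, and the idea of a `healthy` set fed by your monitoring are all assumptions made for this example.

```python
from typing import Optional


def pick_carrier(priority: list[str], healthy: set[str]) -> Optional[str]:
    """Return the highest-priority carrier that is currently healthy, if any."""
    for carrier in priority:
        if carrier in healthy:
            return carrier
    # Nothing healthy: fall back to the manual procedures.
    return None


# UPS is down, so new shipments go to the next carrier in the list.
choice = pick_carrier(["UPS", "FedEx", "Regional LTL"], {"FedEx", "Regional LTL"})
```

Keeping the priority list explicit makes the failover order a business decision that can be reviewed, rather than something buried in integration code.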

Implement manual verification procedures for time-sensitive shipments. Use manual rate lookup for time-sensitive quotes. Keep carrier rate sheets accessible for common lanes. Yes, it's slower than API rates, but it keeps business moving. Document every manual intervention to prevent duplicate shipments when the primary integration comes back online.
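One way to make that documentation useful is a small ledger that the restored integration consults before booking anything. This is a minimal in-memory sketch; the class, its field names, and the sample values are hypothetical, and a real version would persist entries somewhere durable.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ManualLedger:
    """Records shipments booked by hand during an outage."""
    entries: dict = field(default_factory=dict)

    def record(self, order_id: str, carrier: str, tracking_number: str, operator: str) -> None:
        self.entries[order_id] = {
            "carrier": carrier,
            "tracking_number": tracking_number,
            "operator": operator,
            "booked_at": datetime.now(timezone.utc).isoformat(),
        }

    def should_auto_book(self, order_id: str) -> bool:
        # Skip orders that a person already booked manually.
        return order_id not in self.entries


ledger = ManualLedger()
ledger.record("SO-1001", "FedEx", "TRACK-PLACEHOLDER", "dispatcher-on-shift")
```

When the primary integration recovers, replaying the queue through `should_auto_book` keeps order `SO-1001` from being shipped twice.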

Communicate proactively with affected stakeholders. Send updates about potential delays before they call you. Most customers appreciate transparency over silence. Set realistic expectations about restoration timelines based on the diagnostic phase that follows.

Phase 2 (15-60 minutes): Root Cause Identification

Follow a systematic diagnostic sequence that addresses the most common failure modes first. The root causes vary: authentication token expiry, carrier-side infrastructure issues, API rate limits exceeded, or network connectivity problems.
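A first pass at sorting failures into those four buckets can be done from the HTTP status alone. The mapping below is a simplified assumption, not any carrier's documented behavior; real APIs also return error bodies that deserve a closer look.

```python
from typing import Optional


def classify_failure(http_status: Optional[int]) -> str:
    """Map an HTTP status (or None when no response arrived) to a likely root cause."""
    if http_status is None:
        # No response at all: timeout, DNS, TLS, or other connectivity trouble.
        return "network"
    if http_status in (401, 403):
        return "authentication"
    if http_status == 429:
        return "rate_limit"
    if 500 <= http_status <= 599:
        return "carrier_infrastructure"
    return "other"
```

Counting failures per bucket over the last few minutes usually shows which diagnostic step deserves attention first.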

Check authentication credentials first. Most API failures trace back to expired credentials or OAuth configuration issues. UPS moved all of its APIs to an OAuth 2.0 security model in 2024, requiring clients to authenticate with bearer tokens by June 3, 2024. Many companies discovered their integrations broke overnight when the old access key system was deprecated.

Validate rate limiting and quota consumption. USPS's new APIs enforce strict rate limits of approximately 60 requests per hour, down from roughly 6,000 requests per minute without throttling in the legacy system. Check your current consumption against established limits and identify if you're hitting undocumented throttling.
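To see what a limit of that size means during an incident, it helps to work out how long a backlog takes to clear. The helper below is a back-of-the-envelope sketch; the function name is hypothetical, and the assumption that a shipment needs one or two API calls is mine.

```python
def hours_to_drain(backlog: int, limit_per_hour: int, calls_per_shipment: int = 1) -> float:
    """Hours needed to push a backlog through a fixed hourly request quota."""
    return backlog * calls_per_shipment / limit_per_hour


# 200 held shipments at 60 requests per hour, one call each: about 3.3 hours.
one_call = hours_to_drain(200, 60)

# If each shipment needs a rate call plus a label call: about 6.7 hours.
two_calls = hours_to_drain(200, 60, calls_per_shipment=2)
```

If the result is longer than your cutoff window, the backlog has to be split across carriers or credentials rather than simply retried.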

Test alternative integration paths while diagnosis continues. Even though APIs are technically superior to EDI, much of the logistics industry still relies on EDI, particularly smaller companies that don't have the IT resources for full-scale API rollout. Your TMS might support both API and EDI connections to the same carrier.

Phase 3 (60-120 minutes): Service Restoration

Implement restoration procedures systematically, testing each component before moving to the next. Start with the simplest fixes: credential refresh, connection pooling adjustments, and timeout configuration changes.

Document everything for post-mortem analysis. Failed authentication attempts, error codes, and timeline details help prevent recurrence. Your incident documentation becomes the foundation for preventing similar failures and improving detection systems.

Validate restoration with controlled traffic before returning to full operation. Process a small batch of shipments through the restored integration and verify that all downstream systems receive expected data before routing full volume through the recovered API connection.
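The controlled ramp can be sketched as two small helpers: one that proposes batch sizes and one that checks a batch actually arrived downstream. Doubling the batch size at each step and starting at five shipments are assumptions chosen for illustration, and both function names are hypothetical.

```python
def ramp_schedule(full_volume: int, first_batch: int = 5) -> list[int]:
    """Batch sizes that double each step until full volume is reached."""
    if first_batch < 1:
        raise ValueError("first_batch must be at least 1")
    sizes = []
    size = first_batch
    while size < full_volume:
        sizes.append(size)
        size *= 2
    sizes.append(full_volume)
    return sizes


def batch_confirmed(sent_ids: set[str], downstream_ids: set[str]) -> bool:
    """True only if every shipment sent through the API shows up downstream."""
    return sent_ids <= downstream_ids


schedule = ramp_schedule(200)  # [5, 10, 20, 40, 80, 160, 200]
```

Move to the next batch size only when `batch_confirmed` holds for the current one; a single missing shipment is a reason to stop and investigate.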

Prevention Architecture: Building Resilient Integration Systems

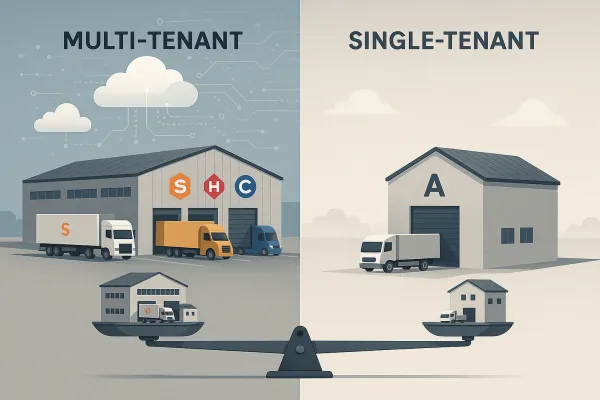

The enterprises that survive 2026's integration challenges aren't just better at fixing problems; they're building systems that fail gracefully and recover automatically. Hub-and-spoke versus point-to-point integration strategies create fundamentally different operational outcomes. Hub-and-spoke architectures centralize data transformation and business logic, simplifying compliance management and reducing maintenance overhead. Point-to-point connections offer lower initial costs but create complex webs of dependencies that become expensive to maintain and modify.

Modern integration architecture prioritizes abstraction layers over direct connections. Modern TMS platforms from providers like nShift, Transporeon, Alpega, and Cargoson prioritize RESTful APIs with standardized data formats. This approach enables rapid carrier onboarding, simplified compliance reporting, and easier adaptation to regulatory changes.

For enterprises managing significant carrier portfolios, the maintenance mathematics become clear. Per-carrier integration costs accumulate faster than orchestration layer investments, particularly when you factor in the operational disruption costs of point-to-point failures.
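The arithmetic behind that claim is simple enough to check. The sketch below counts connections in the worst case where every internal system talks to every carrier, which is an assumption; real estates are usually somewhere below that ceiling.

```python
def point_to_point(carriers: int, internal_systems: int) -> int:
    """Worst case: every internal system integrates with every carrier directly."""
    return carriers * internal_systems


def hub_and_spoke(carriers: int, internal_systems: int) -> int:
    """Each carrier and each internal system connects once, to the hub."""
    return carriers + internal_systems


# Ten carriers, two WMS instances, and three OMS instances (five internal systems).
direct = point_to_point(10, 5)   # 50 possible connections
via_hub = hub_and_spoke(10, 5)   # 15 connections
```

Adding an eleventh carrier costs up to five new integrations in the direct model and exactly one behind a hub, which is where the maintenance gap opens up.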

Operational Playbooks for Common Failure Scenarios

Authentication and Credential Management Failures

Set up automated credential monitoring that checks token expiry dates at least 48 hours before expiration. Multiple authentication methods provide resilience. Store backup API keys, OAuth tokens, and EDI credentials separately. Build rotation procedures that don't require production downtime.
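A minimal version of that monitor is a function that compares known expiry times against a lead window. The credential names and the idea of an `expiries` mapping pulled from your secrets store are assumptions; the check is most relevant for longer-lived credentials such as client secrets or API keys, since short-lived access tokens are normally refreshed automatically.

```python
from datetime import datetime, timedelta, timezone


def expiring_credentials(
    expiries: dict[str, datetime],
    now: datetime,
    lead: timedelta = timedelta(hours=48),
) -> list[str]:
    """Names of credentials that expire within the lead window (or already have)."""
    return sorted(name for name, expires_at in expiries.items() if expires_at - now <= lead)


now = datetime(2026, 1, 1, tzinfo=timezone.utc)
expiries = {
    "ups_client_secret": now + timedelta(hours=24),   # inside the 48-hour window
    "fedex_api_key": now + timedelta(days=30),        # comfortably valid
}
due = expiring_credentials(expiries, now)
```

Run on a schedule, the returned list becomes the rotation queue for the week instead of a surprise during peak hours.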

Implement credential rotation testing in non-production environments first. You need token refresh logic, proper scope management, and error handling for authentication failures that can cascade across your entire shipping workflow.

API Rate Limiting and Timeout Issues

Monitor rate limit consumption proactively, not reactively. API-first organizations recover from API failures faster, often within an hour, partly because they watch quota usage before it becomes a problem. Set alerts at 70% of your allocated quota rather than waiting for 429 responses.
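The 70% rule is easy to express as a check that runs alongside your request counter. The function name is hypothetical, and integer math is used here only to avoid floating-point surprises at the boundary.

```python
def quota_alert(used: int, limit: int, threshold_pct: int = 70) -> bool:
    """True once usage reaches the given percentage of the allocated quota."""
    return used * 100 >= limit * threshold_pct


# With a 60-request quota, the alert fires at the 42nd request.
fires_at_42 = quota_alert(42, 60)
quiet_at_41 = quota_alert(41, 60)
```

An alert at this point leaves roughly a third of the quota to reroute or slow traffic before the carrier starts rejecting calls.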

Build intelligent queuing systems that can distribute requests across time windows and multiple authentication contexts. Cargoson, along with competitors like MercuryGate and BluJay, built abstraction layers that handle the OAuth complexity, implement intelligent rate limiting queues, and provide fallback mechanisms when USPS quotas are exceeded.
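A queue of this kind can be approximated with a pacer that releases at most one request per time slot. This sketch covers only the time-window part, not multiple authentication contexts, and it is not how any of the named vendors implement it; the class name and the explicit `now` argument (seconds on whatever clock you pass in) are assumptions made to keep the example testable.

```python
from collections import deque
from typing import Any, Optional


class PacedQueue:
    """Releases queued requests evenly across a quota window."""

    def __init__(self, limit: int, window_seconds: float) -> None:
        self.interval = window_seconds / limit  # seconds between releases
        self.pending: deque = deque()
        self.next_slot = 0.0

    def submit(self, request: Any) -> None:
        self.pending.append(request)

    def release(self, now: float) -> Optional[Any]:
        """Return the next request if a slot is open at time `now`, else None."""
        if self.pending and now >= self.next_slot:
            self.next_slot = now + self.interval
            return self.pending.popleft()
        return None


queue = PacedQueue(limit=60, window_seconds=3600)  # one release per 60 seconds
queue.submit("label-request-a")
queue.submit("label-request-b")
```

Calling `release` from a worker loop spreads the backlog across the hour instead of burning the whole quota in the first minute.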

Data Mapping and Transformation Errors

Test data mapping with real production data, not sanitized test datasets that mask field mapping issues. Run order creation flows using real purchase order data from your ERP system: copy production orders from your top 10 customers and validate that all required fields populate correctly in carrier API calls.

Build validation layers that catch mapping errors before they reach carrier APIs. Failed field validation should trigger fallback procedures, not cascade through to customer-facing systems.
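A validation layer can start as a required-field check that runs before any carrier call. The field names below are hypothetical placeholders; the real list should come from each carrier's schema.

```python
# Hypothetical required fields; replace with the carrier's actual schema.
REQUIRED_FIELDS = ("recipient_name", "address_line1", "postal_code", "country_code", "weight_kg")


def missing_fields(shipment: dict) -> list[str]:
    """List required fields that are absent or empty in a mapped shipment."""
    return [name for name in REQUIRED_FIELDS if shipment.get(name) in (None, "")]


order = {
    "recipient_name": "Example Receiver",
    "address_line1": "1 Example Street",
    "postal_code": "",          # mapping produced an empty value
    "country_code": "EE",
    "weight_kg": 12.5,
}
problems = missing_fields(order)
```

A non-empty result should route the order to a fallback or review queue rather than letting the carrier API reject it in front of the customer.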

Building a Carrier Integration Incident Response Team

Successful incident response requires clear roles, defined escalation procedures, and decision frameworks that work when systems fail. Define primary and secondary contacts for each carrier integration, with specific expertise areas mapped to team members.

Create decision trees for different failure types. Authentication failures follow different escalation paths than rate limiting issues. Network connectivity problems require different expertise than data mapping errors. Your incident response team needs playbooks that route problems to the right people with the right tools.
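In its simplest form, such a decision tree is a lookup from failure type to owner with a safe default. The failure labels and role names here are invented for illustration; yours should match your own on-call structure.

```python
# Hypothetical routing table: failure type -> first responder role.
ESCALATION = {
    "authentication": "platform engineer on call",
    "rate_limit": "integration engineer on call",
    "network": "infrastructure on call",
    "data_mapping": "TMS analyst on call",
}


def route(failure_type: str) -> str:
    """Return the role that should be paged first for a given failure type."""
    # Unknown failure types go to a coordinator instead of being dropped.
    return ESCALATION.get(failure_type, "incident commander")
```

Keeping the table in version control gives the team one reviewed place to update when roles or carriers change.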

Establish vendor communication protocols and SLA management procedures. Know which carrier support channels provide the fastest response for production issues. Understand escalation procedures within carrier organizations and maintain direct contacts when possible.

Multi-carrier platforms like Cargoson, EasyPost, nShift, and ShipEngine handle this complexity through abstraction layers. Vendor-agnostic monitoring becomes crucial when you run several of these platforms simultaneously. Your incident response procedures need to account for platform-specific monitoring requirements and vendor-specific escalation procedures.

Long-term Strategic Improvements

The integration strategy decisions you make today determine your operational flexibility through 2027 and beyond. API-first integration strategies provide superior flexibility for European operations.

Budget for the complete cost of integration resilience, not just the initial implementation. Budget for emergency carrier onboarding fees, spot rate premiums when contracted carriers can't deliver, and expedited integration costs for backup providers. Plan for 15-20% budget increases in 2026-2027 if reactive, or 8-12% if proactive with proper contract protection.

ROI evaluation must include operational resilience metrics. Track operational improvements like reduced manual data entry, faster carrier onboarding, improved compliance reporting accuracy, and enhanced visibility across your transport network. European operations often see 15-25% improvements in transport administrative efficiency within the first year of successful TMS data integration.

The companies that thrive through 2026's API integration challenges will be those that treated connectivity as infrastructure, not features. They invested in systems that fail gracefully, recover quickly, and adapt to changing requirements without requiring complete re-implementation.

Your 2-hour emergency protocol prevents 90% of integration disasters not because it's perfect, but because it's systematic, tested, and ready when Tuesday morning turns chaotic. The question isn't whether your next carrier API integration will fail. It's whether you'll be ready when it does.